YouTube Ingest by Hand (Part 2)

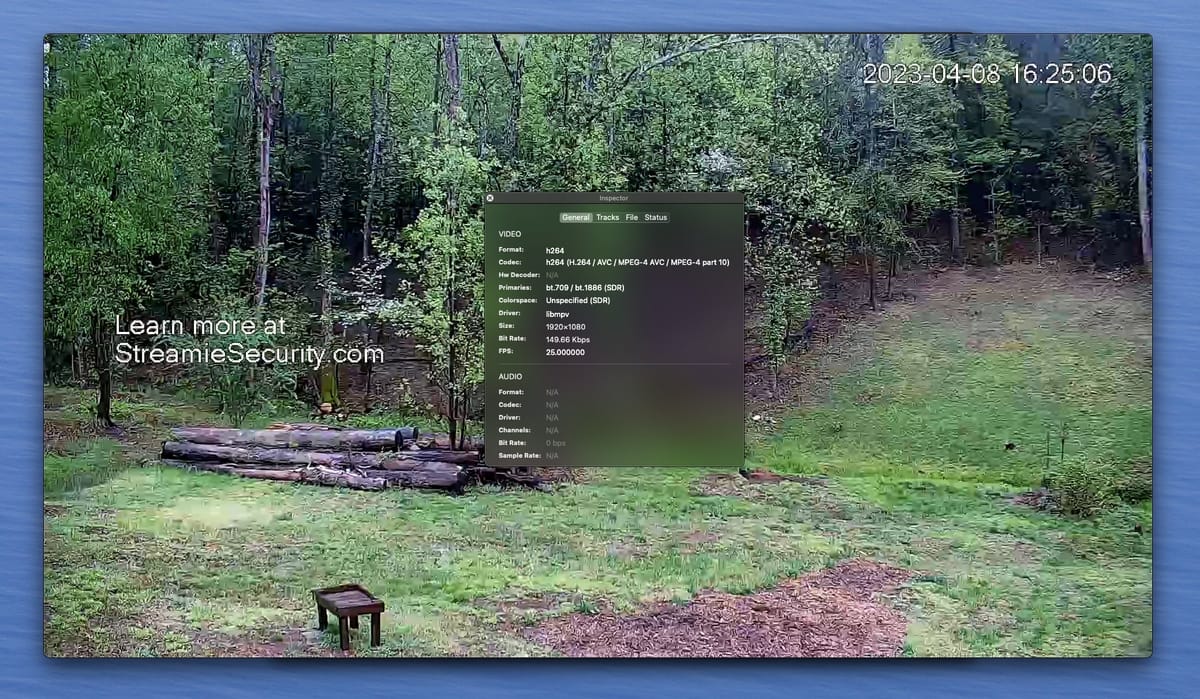

After last night: I was trying to psych myself up to manually pack the encoded H.264 frames into a fragmented MP4 container. I'd need some third-party tool to validate my work. There was nothing exciting about this "solution". And then I found this:

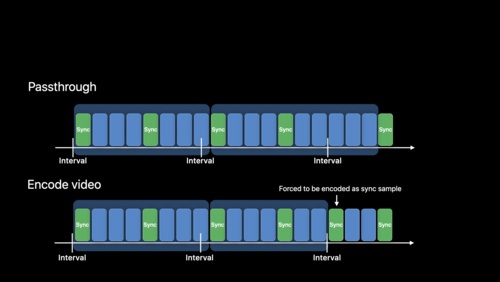

This should have occurred to me earlier, because HLS (Apple's darling) relies on fragmented MP4, so of course they'd add AVFoundation support. I'm already forced to transcode, so the encoder just needs to be configured with ".outputFileTypeProfile = .mpeg4AppleHLS" and maybe a target duration via .preferredOutputSegmentInterval.

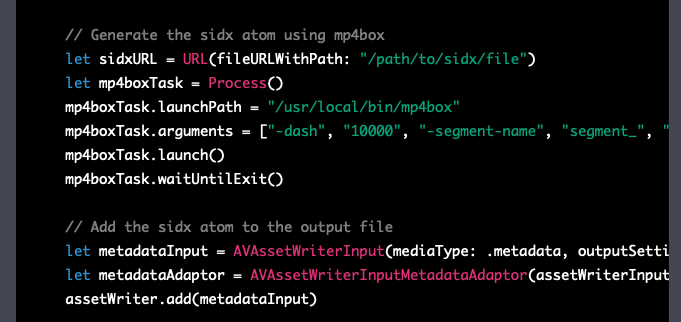

Yesterday I asked ChatGPT how to write a fragmented MP4 file with AVAssetWriter, and it suggested using the command-line tool "mp4box" to do all of the actual work, so I concluded that AVAssetWriter couldn't do it directly.

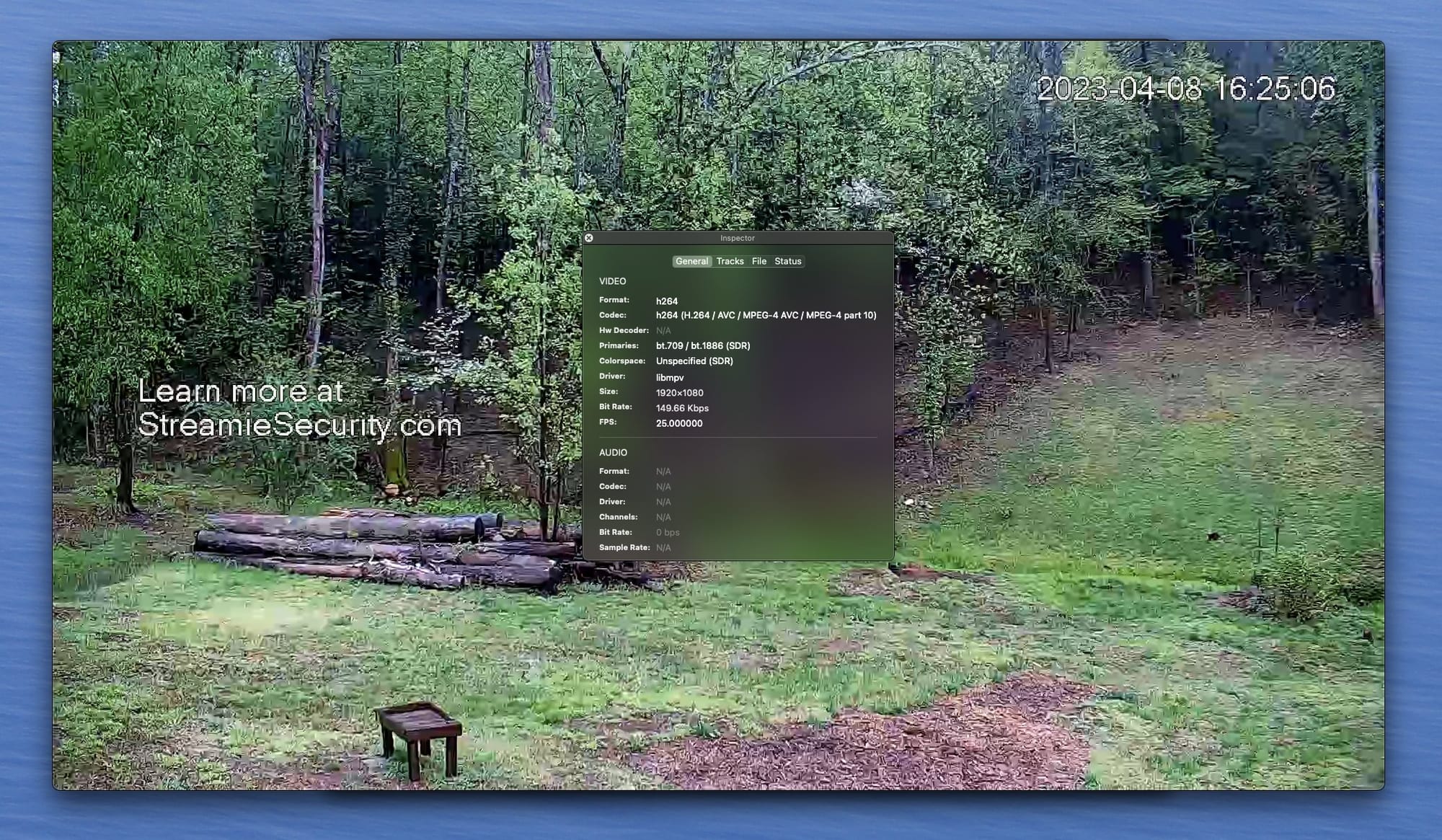

I've got AVAssetWriter producing the initialization segment and the media fragments. @YouTube's MPEG-DASH ingestion still isn't impressed. My next thought is that I need to continuously feed frames to the movie writer, and push the fragments as they're produced.

I've got my new FragmentedMovieWriter class done, but AVAssetWriter won't handle both audio and video, which I guess makes sense (thinking of HLS keeping those separate). But YouTube says that it requires a multiplexed stream. ARRRRGH.

AVAssetWriter supports audio + video for fragmented writing but only when the preferredOutputSegmentInterval is set to .indefinite, which is a strange setting to support for a *fragmented* writing mode. You know, with a single, indefinite fragment...?!

Somewhat surprisingly, if I take the initialization segment and concatenate a handful of subsequent media segments into a single file, it plays just fine. YouTube's ingestion remains unimpressed.
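For the record, that concatenation test is nothing more than byte-level appending. Here's a minimal Python sketch of it; the function name and the file names in the usage note are made up for illustration, not what my writer actually emits.

```python
from pathlib import Path


def concatenate_segments(init_path, media_paths, output_path):
    """Write the initialization segment followed by each media segment, in order.

    Returns the number of bytes written. The paths are hypothetical: pass
    whatever files your own segment writer produced.
    """
    total = 0
    with open(output_path, "wb") as out:
        # The initialization segment (ftyp + moov) has to come first.
        total += out.write(Path(init_path).read_bytes())
        # Media segments (moof + mdat pairs) follow in presentation order.
        for media_path in media_paths:
            total += out.write(Path(media_path).read_bytes())
    return total
```

Something like `concatenate_segments("init.mp4", ["seg1.m4s", "seg2.m4s"], "joined.mp4")` gives a single file to open in a player. That it plays only tells me the fragments are internally consistent, not that they're what the ingestion endpoint expects.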

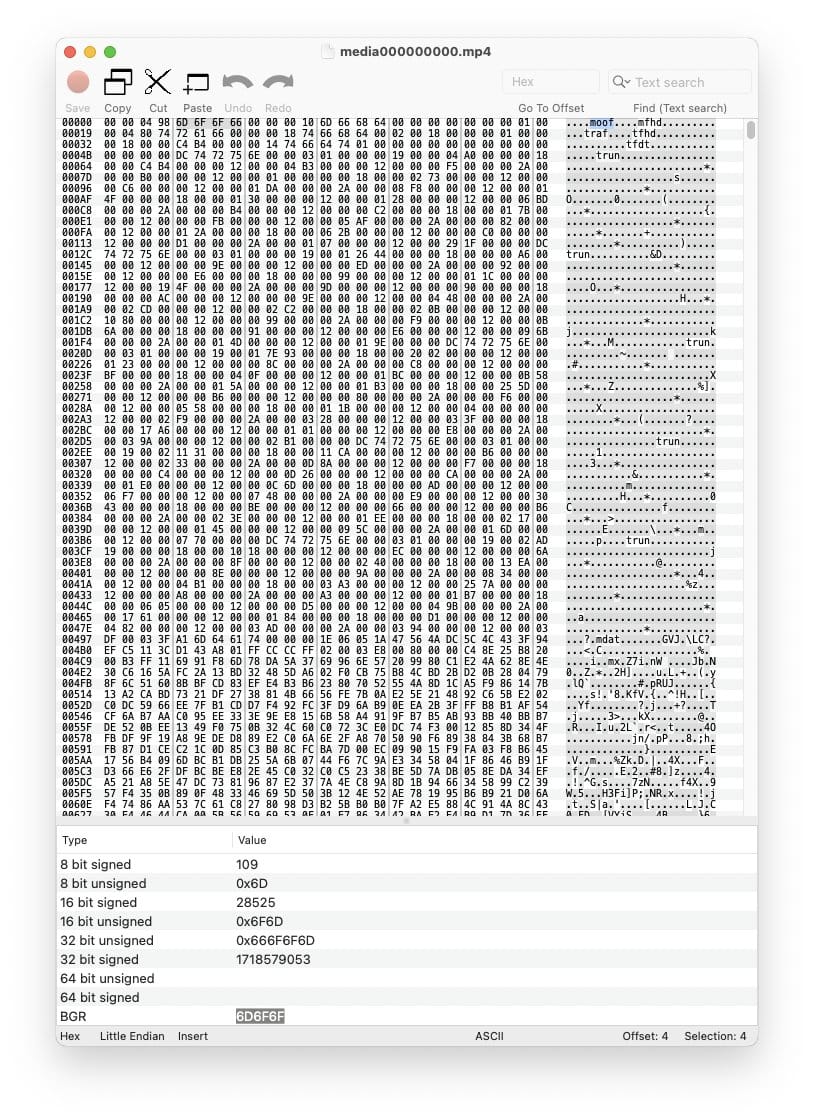

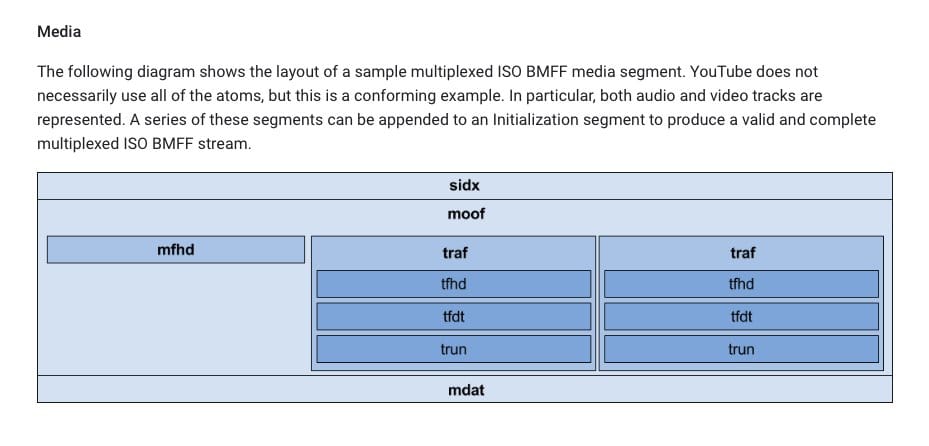

When I open a media segment in 0xED, I can see that it starts with a MOOF atom instead of a SIDX (which YouTube kinda indicates that it wants). Is that important? Can I fabricate this atom myself?
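The same check can be done without a hex editor. Below is a rough Python sketch that walks the top-level atoms of a segment and reports each type, offset, and size, relying only on the standard ISO BMFF box header layout (32-bit big-endian size, then a four-character type). The helper names are my own, and `make_box` exists only to build toy data for trying it out.

```python
import struct


def list_top_level_boxes(data: bytes):
    """Return (type, offset, size) for each top-level box in an ISO BMFF buffer."""
    boxes = []
    offset = 0
    while offset + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, offset)
        header_len = 8
        if size == 1:
            # A size of 1 means a 64-bit "largesize" follows the type field.
            if offset + 16 > len(data):
                break
            size = struct.unpack_from(">Q", data, offset + 8)[0]
            header_len = 16
        elif size == 0:
            # A size of 0 means the box runs to the end of the data.
            size = len(data) - offset
        if size < header_len:
            break  # malformed header; stop rather than loop forever
        boxes.append((box_type.decode("latin-1"), offset, size))
        offset += size
    return boxes


def make_box(box_type: bytes, payload: bytes) -> bytes:
    """Build a toy box (hypothetical helper, for demonstration only)."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload
```

Running `list_top_level_boxes(open("seg1.m4s", "rb").read())` on a real segment (file name hypothetical) shows at a glance whether the first atom is `moof`, `sidx`, or `styp`.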

I just had a thought. I've been encoding the ampersands (&) in the MPD as %26 instead of &amp;. If that's actually as wrong as I think it is, that would mean that the ingestion service would never match any of the media files that I'm PUT'ing.

No dice on that.
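Even though it didn't fix anything, the two encodings really do mean different things. In XML a literal ampersand is written as `&amp;` and the parser turns it back into `&`, whereas `%26` is URL percent-encoding and makes the ampersand part of a parameter's value. A small Python sketch of the difference; the URL and its parameter names are invented for illustration and are not YouTube's actual ingestion URL.

```python
import xml.etree.ElementTree as ET
from urllib.parse import parse_qs, urlsplit
from xml.sax.saxutils import escape

# Hypothetical URL with two query parameters.
URL = "https://example.invalid/upload?id=abc&file=media_1.mp4"


def text_after_xml_parse(written_text: str) -> str:
    """Embed text in an element as written, parse it, and return what a reader sees."""
    return ET.fromstring(f"<BaseURL>{written_text}</BaseURL>").text


def query_params(url: str) -> dict:
    """Split a URL's query string into parameters."""
    return parse_qs(urlsplit(url).query)


xml_escaped = escape(URL)                 # '&' written as '&amp;'
percent_encoded = URL.replace("&", "%26")  # '&' written as '%26'

# XML escaping round-trips to the original URL with two parameters.
print(query_params(text_after_xml_parse(xml_escaped)))
# Percent-encoding survives XML parsing and collapses into one parameter.
print(query_params(text_after_xml_parse(percent_encoded)))
```

With `%26`, the server sees a single `id` parameter whose value contains the rest of the query string, which is why I suspected the file names would never match.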

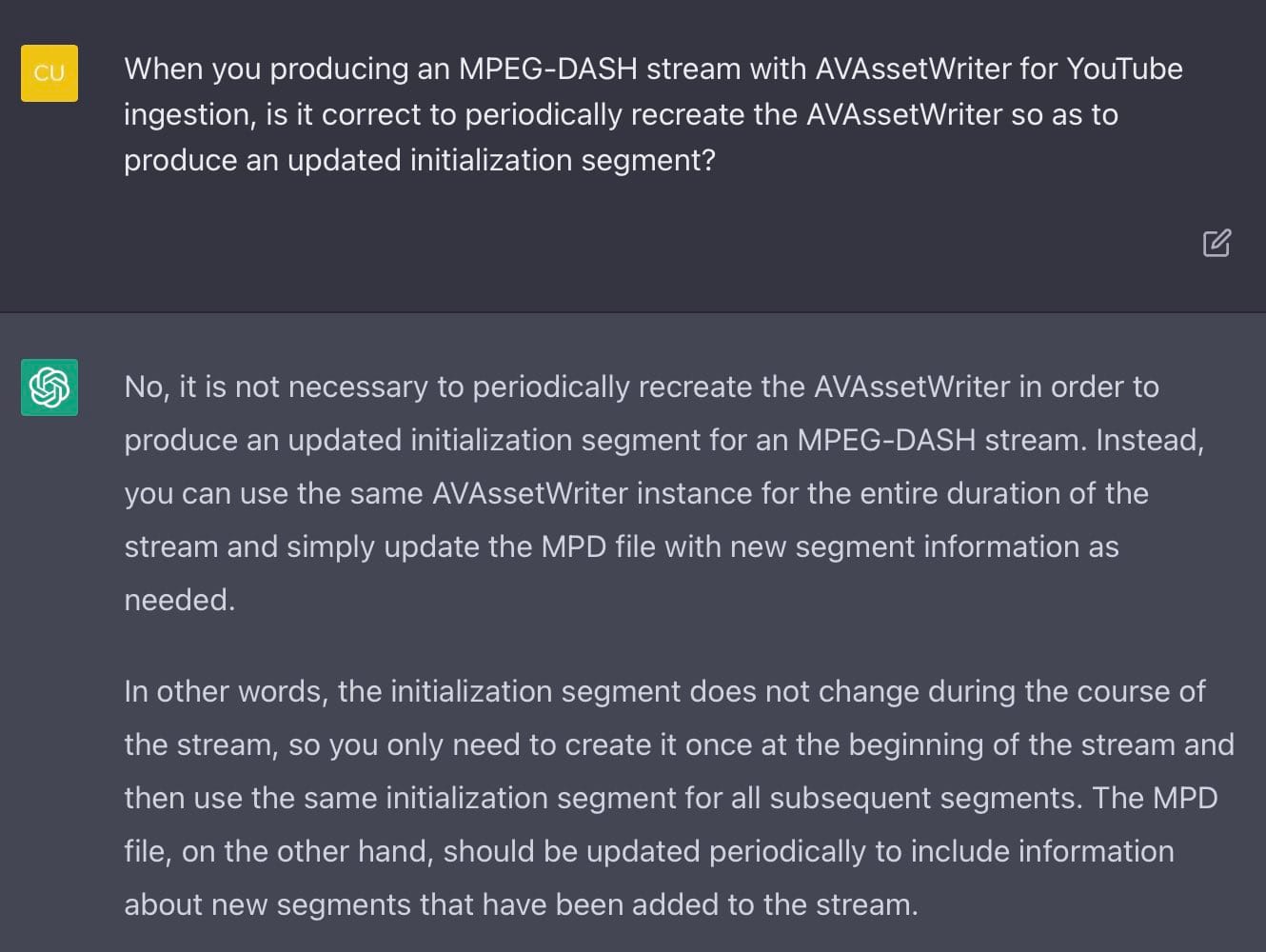

Here's a thing: the fragmented movie writer only produces one initialization segment, while YouTube wants an updated one at intervals of less than 60 seconds. Surely sending the same thing repeatedly has no value, so does that mean I should restart the fragmented movie writer?

Is ChatGPT just telling me what I want to hear? If this is correct, I think I’m finally out of ideas. I mean, the one thing I can’t test is creating and pushing a multiplexed stream. Maybe I’ll look at some example MPDs and segmented media files and look for differences.

To be continued.